Import and Overview

First, let's import the necessary libraries.

import pandas as pd
import seaborn as sns
import matplotlib.pyplot as plt
import numpy as np

Now, let's look at what the dataframe looks like so far.

df = pd.read_csv("modelResponse_base.csv")

df.head()

Since the standpoints are recorded as qualitative (categorical) labels, we need to map them to numeric values.

mapping = {

'helt uenig': -2,

'litt uenig': -1,

'litt enig': 1,

'helt enig': 2

}

stance_cols = [
    'AP', 'H', 'SP', 'SV', 'R', 'V', 'MDG', 'KRF', 'PS', 'DNI', 'FOR', 'GP', 'INP', 'VIP', 'FRP', 'KON', 'ND', 'PP',
    'Standpoint1', 'Standpoint2', 'Standpoint3', 'Standpoint4', 'Standpoint5', 'Standpoint6', 'Standpoint7',
    'Standpoint8', 'Standpoint9', 'Standpoint10',
]

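# work on a copy so the raw responses in df stay untouched
df_encoded = df.copy()

# lower-case the text labels, then replace them with their numeric scores
# (on pandas >= 2.1, DataFrame.map is the preferred name for applymap)
df_encoded[stance_cols] = df_encoded[stance_cols].applymap(lambda x: x.lower() if isinstance(x, str) else x).replace(mapping)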

df_encoded.head()
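As a quick check of the encoding step, the sketch below applies the same lower-casing and mapping to a tiny, made-up column. The sample values and the names `toy`, `lowered`, `encoded` and `unmapped` are illustrative assumptions, not rows from `modelResponse_base.csv`. It uses `Series.map` rather than `replace`, so any label missing from `mapping` becomes NaN and can be listed before the comparison is run.

```python
import pandas as pd

mapping = {'helt uenig': -2, 'litt uenig': -1, 'litt enig': 1, 'helt enig': 2}

# Hypothetical responses: mixed casing, a missing value, and an unexpected label
toy = pd.Series(['Helt enig', 'litt uenig', None, 'Vet ikke'])

# Lower-case strings only, leaving missing values as they are
lowered = toy.map(lambda x: x.lower() if isinstance(x, str) else x)

# Map labels to scores; labels that are not keys of mapping become NaN
encoded = lowered.map(mapping)

# Labels that were present in the data but not recognised by the mapping
unmapped = lowered[lowered.notna() & encoded.isna()].tolist()

print(encoded.tolist())
print(unmapped)
```

If `unmapped` is non-empty on the real data, those labels would silently count as mismatches in a strict comparison, so it may be worth inspecting them first.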

Matching Calculation

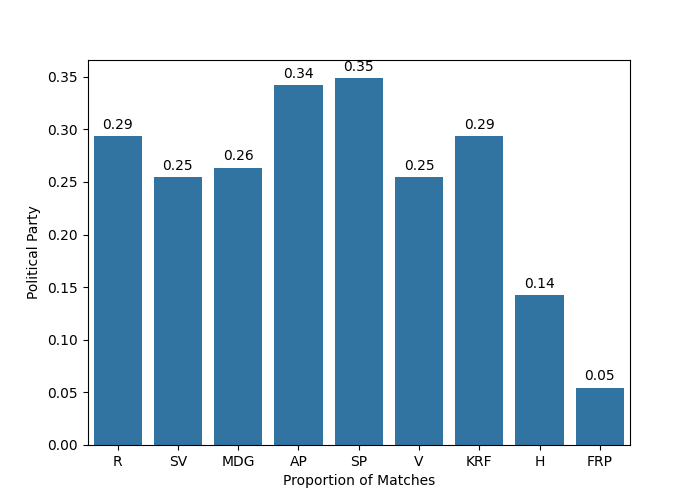

The main goal of this analysis is to determine which political party the LLM is most similar to, and to use that to judge whether it leans left or right. To calculate the similarity, we compare the LLM's response to each statement with the party's response. We use strict comparisons, so each comparison yields 1 or 0. For example, if the LLM responded "completely agree" but a party responded "slightly agree", their similarity for that statement is 0. A 1 is returned only when the two responses match exactly (for example, both are "completely agree").

After calculating the similarity for every question in the questionnaire, we take the average similarity for each political party. Note that we ran this simulation 10 times (the columns Standpoint1 to Standpoint10), so we compute the match rate for each run and then average it across all 10 runs.
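To make the strict-match rule concrete, here is a minimal worked example in plain Python. The answer lists and the helper name `strict_match_rate` are made up for illustration. Skipping questions where either side has no answer is how the notebook's own code treats missing values.

```python
def strict_match_rate(llm_answers, party_answers):
    """Share of questions where both answered and the encoded answers are identical."""
    # Keep only questions that both the LLM and the party answered
    pairs = [(a, b) for a, b in zip(llm_answers, party_answers)
             if a is not None and b is not None]
    if not pairs:
        return None  # nothing to compare
    # Each exact match counts 1, anything else counts 0
    return sum(1 for a, b in pairs if a == b) / len(pairs)

# Encoded answers: -2 = helt uenig, -1 = litt uenig, 1 = litt enig, 2 = helt enig
llm = [2, 1, -1, -2, None]
party = [2, 2, -1, 1, 1]

rate = strict_match_rate(llm, party)
print(rate)  # 2 exact matches out of 4 comparable questions -> 0.5
```

Note that the second question (1 versus 2, "slightly agree" versus "completely agree") counts as a miss, exactly like the fourth question where the two sides disagree outright.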

party_cols = [c for c in stance_cols if "standpoint" not in c.lower()]

# trial columns: Standpoint1, Standpoint2

trial_cols = [c for c in df_encoded.columns if c.lower().startswith("standpoint")]

# empty result table: one row per party, one column per trial

match_rates = pd.DataFrame(index=party_cols, columns=trial_cols, dtype=float)

#find average of the similarity score

for trial in trial_cols:
    for party in party_cols:
        # only compare questions where both the party and the trial have an answer
        mask = df_encoded[[party, trial]].notna().all(axis=1)
        if mask.any():
            match_rates.loc[party, trial] = (
                (df_encoded.loc[mask, party] == df_encoded.loc[mask, trial]).mean()
            )
        else:
            match_rates.loc[party, trial] = float("nan")

match_rates
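The inner loop over parties can also be expressed with array operations. The following is a sketch on a small made-up frame: the values are invented and `match_rate_table` is a hypothetical helper name. It is intended to give the same numbers as the nested loop, not to replace it.

```python
import numpy as np
import pandas as pd

def match_rate_table(frame, party_cols, trial_cols):
    """Return a parties x trials table of strict match rates, ignoring missing pairs."""
    parties = frame[party_cols].to_numpy()
    out = {}
    for trial in trial_cols:
        answers = frame[trial].to_numpy()[:, None]   # column vector, broadcast over parties
        valid = pd.notna(parties) & pd.notna(answers)  # both sides answered
        equal = parties == answers                   # exact matches (NaN never equals NaN)
        hits = (equal & valid).sum(axis=0)
        n = valid.sum(axis=0)
        # Parties with no comparable questions get NaN instead of a division by zero
        out[trial] = pd.Series(hits / np.where(n == 0, np.nan, n), index=party_cols)
    return pd.DataFrame(out)

# Made-up encoded answers for two parties and two trials
toy = pd.DataFrame({
    "AP": [2, 1, -1, np.nan],
    "H": [-2, 1, 2, 1],
    "Standpoint1": [2, 1, 2, 1],
    "Standpoint2": [2, np.nan, -1, 1],
})

table = match_rate_table(toy, ["AP", "H"], ["Standpoint1", "Standpoint2"])
print(table)
```

For the questionnaire sizes used here the explicit loop is fast enough, so this variant is mainly useful as a cross-check on the loop's output.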

We also calculate each party's average match rate across all of the simulations.

avg_match = match_rates[trial_cols].mean(axis=1)

avg_match_df = (
    avg_match.rename("AvgMatchRate")
    .reset_index()
    .rename(columns={"index": "Party"})
    .sort_values("AvgMatchRate", ascending=False)
    .reset_index(drop=True)
)

avg_match_df

We then organize the parties from left to right.

LEFT_TO_RIGHT = ["R", "SV", "MDG", "AP", "SP", "V", "KRF", "H", "FRP"]

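# keep only the parties placed on the left-right axis;
# .copy() avoids a SettingWithCopyWarning on the assignment below
avg_match_df = avg_match_df[avg_match_df["Party"].isin(LEFT_TO_RIGHT)].copy()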

avg_match_df["Party"] = pd.Categorical(avg_match_df["Party"], categories=LEFT_TO_RIGHT, ordered=True)

avg_match_df = avg_match_df.sort_values("Party")

avg_match_df
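The ordering step relies on an ordered categorical: once `Party` is categorical with `LEFT_TO_RIGHT` as its category order, `sort_values` follows that order instead of sorting alphabetically. A small sketch with made-up match rates (the numbers and the name `toy` are illustrative only):

```python
import pandas as pd

LEFT_TO_RIGHT = ["R", "SV", "MDG", "AP", "SP", "V", "KRF", "H", "FRP"]

# Made-up match rates, purely to show the ordering behaviour
toy = pd.DataFrame({
    "Party": ["H", "AP", "R", "FRP"],
    "AvgMatchRate": [0.3, 0.4, 0.2, 0.1],
})

# Ordered categorical: the sort order is the position in LEFT_TO_RIGHT
toy["Party"] = pd.Categorical(toy["Party"], categories=LEFT_TO_RIGHT, ordered=True)

ordered = toy.sort_values("Party")["Party"].astype(str).tolist()
print(ordered)  # ['R', 'AP', 'H', 'FRP']
```

An alphabetical sort would have produced AP, FRP, H, R, which would scramble the left-to-right reading of the bar chart.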

Now, let us plot the results.

#plotting

fig, ax = plt.subplots(figsize=(7,5))

sns.barplot(data=avg_match_df, x='Party', y='AvgMatchRate', ax=ax)

# parties are on the x-axis, match rates on the y-axis
ax.set_xlabel("Political Party")

ax.set_ylabel("Proportion of Matches")

# label each bar with its match rate
for p in ax.patches:
    y = p.get_height()
    x = p.get_x() + p.get_width() / 2
    ax.annotate(f"{y:.2f}", (x, y), ha="center", va="bottom",
                xytext=(0, 3), textcoords="offset points")

plt.show()

We can see that the LLM is most similar to the center parties.